By Michelle McKinney, MBA, CSM | CEO and Founder, TransformXperience LLC

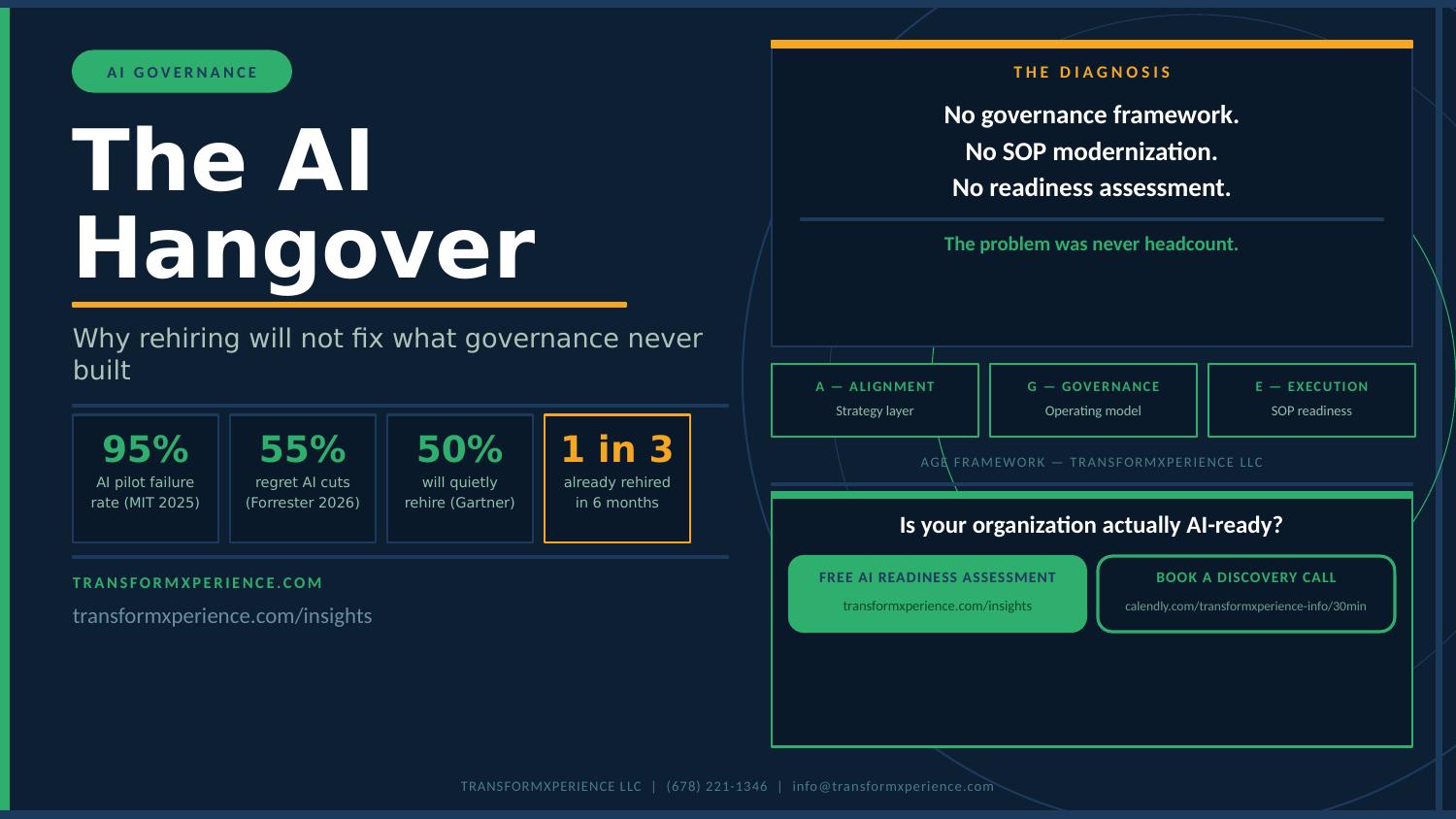

Over the past several weeks on The Daily Byte, we have been talking about the AI Hangover. The cuts after overspending on AI pilots that produced nothing. The rehiring wave that is now in full swing across industries. The slow, expensive realization that deploying AI without governance is not innovation. It is a liability.

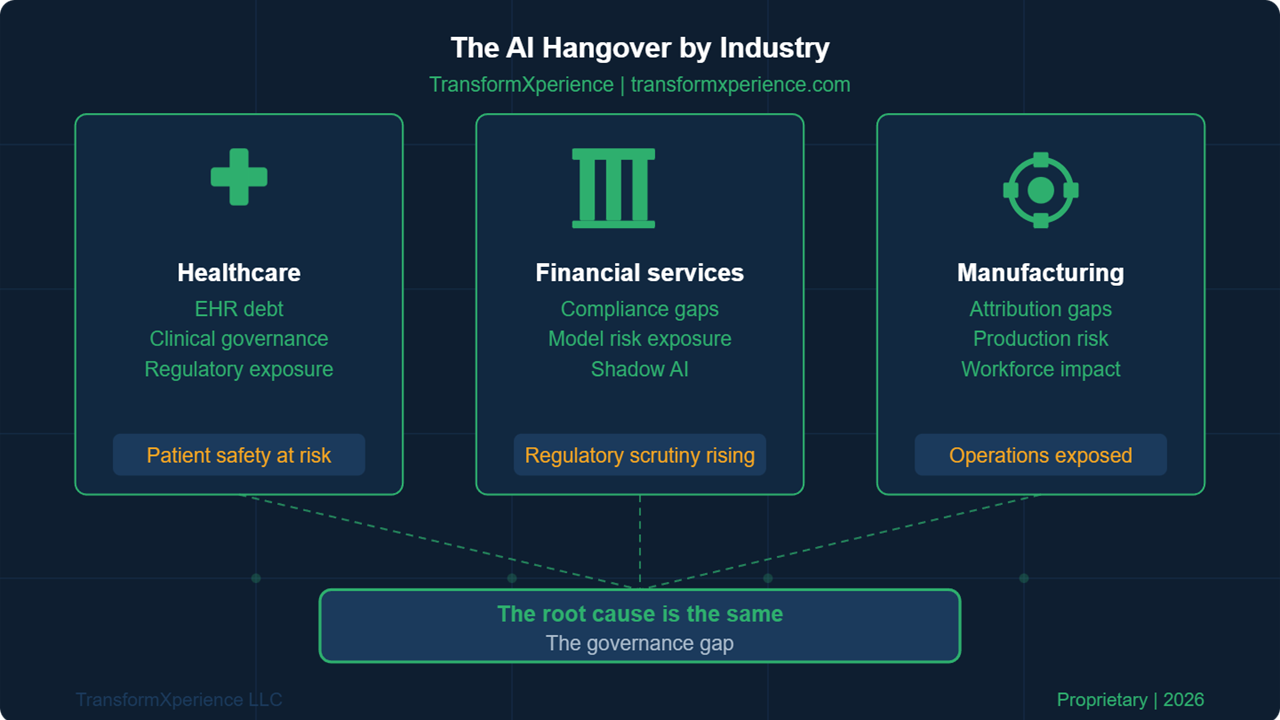

What I want to talk about today is something that gets lost in those broad conversations. The AI Hangover does not look the same everywhere. In a hospital system, it looks like compromised patient data and regulatory exposure. In a financial services firm, it looks like model risk violations and compliance gaps. In a manufacturing operation, it looks like production anomalies and quality control failures that nobody can trace back to the AI making the decision.

The symptoms are different. The stakes are different. The regulatory environments are different. But when you peel back the surface layer in every one of these industries, you find the same thing underneath. A governance gap. Decisions being made by AI systems that no one owns. Outputs being trusted by teams that have no framework for validating them. Risk accumulating in silence because no one built the operating structure to catch it.

That is what this post is about. Three industries. Three versions of the same hangover. And one conversation every executive in each of them needs to have before the next AI investment gets approved.

| THE PATTERN: Organizations that invested heavily in AI between 2022 and 2024 are now in one of two places. They are either quietly managing the fallout from governance failures they have not publicly disclosed, or they are actively rebuilding the operating structures they skipped the first time around. Either way, they are paying twice. |

Healthcare: When AI Fails, Patients Feel It First

Healthcare organizations moved fast on AI. Predictive patient risk scoring. AI-assisted diagnostic imaging. Automated prior authorization. Clinical decision support tools embedded directly into electronic health record workflows. The use cases were compelling and the vendor pitches were persuasive.

What most healthcare organizations did not build was the governance layer that makes any of those tools safe to operate at scale. And in healthcare, the cost of that omission is not just financial. It is clinical.

What the AI Hangover Looks Like in Healthcare

The most common pattern we see is what I call inherited AI risk. A health system signed a contract with a vendor whose platform uses AI to make recommendations. The clinical team uses the recommendations. Nobody on the health system side owns the process of validating those recommendations, monitoring for model drift, or catching the moment the AI starts producing outputs that no longer reflect current patient populations or updated clinical evidence.
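One way to make "monitoring for model drift" concrete is a scheduled comparison between the population a model was validated on and the population it is scoring today. The sketch below uses the population stability index (PSI), a widely used drift statistic. It is a minimal illustration, not a description of any vendor's method: the function names, the ten-bin default, and the 0.25 review threshold (a common rule of thumb, not a regulatory standard) are all assumptions.

```python
import math

def population_stability_index(baseline, current, bins=10):
    """PSI between two samples of one numeric model input or score."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))

# Assumed threshold: a rule of thumb, to be set by whoever owns the model.
REVIEW_THRESHOLD = 0.25

def needs_owner_review(baseline, current):
    """True when the current population has shifted enough to escalate."""
    return population_stability_index(baseline, current) > REVIEW_THRESHOLD
```

The statistic matters less than the routing. A check like this only closes the gap if its result goes to a named owner who has the authority to act on it.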

The regulatory exposure in this scenario is significant. Protected health information processed by third-party AI systems requires governance documentation that most organizations cannot produce on demand. Audit readiness for AI-assisted clinical decisions is not a theoretical concern. It is a current regulatory expectation in several states and a near-term federal priority.

Beyond compliance, there is a clinical governance gap. Who in the organization is responsible for reviewing AI-assisted decisions that led to adverse outcomes? Who has the authority to pause an AI-assisted workflow when results become unreliable? In most health systems, the honest answer is nobody. The AI runs. People trust it. And the accountability sits in a vacuum.

| HEALTHCARE REALITY CHECK: If your organization cannot answer these three questions, your AI governance posture is a liability. (1) Who owns the process of validating AI-assisted clinical recommendations on an ongoing basis? (2) What is the escalation path when an AI output is flagged as potentially incorrect? (3) Where does your AI governance documentation live, and when was it last reviewed by your compliance team? |

The good news for healthcare organizations is that you are not starting from zero. HIPAA compliance infrastructure, clinical quality management processes, and existing IT governance frameworks are all assets that can be extended to cover AI. The missing piece in most health systems is not the will to govern AI. It is the operating model that makes governance continuous rather than periodic.

For a deeper look at the specific EHR implementation challenges that created this environment, read our post on EHR Implementation Problems and the governance gaps that accompany them.

Financial Services: Compliance Without a Net

Financial services firms operate in one of the most heavily regulated environments for AI in the world. Model risk management guidance from banking regulators has been in place for years. Fair lending requirements, explainability obligations, and third-party vendor risk frameworks all apply to AI systems used in credit decisioning, fraud detection, and customer-facing automation.

And yet, the AI Hangover is real in financial services. It just shows up differently than it does in healthcare.

What the AI Hangover Looks Like in Financial Services

The pattern in financial services is what I call governance theater. Organizations have the documentation. They have the policies. They can produce an AI governance framework on request. What they cannot demonstrate is that anyone is operating that framework on a daily basis. The policies exist in documents. The governance exists on paper. The AI runs in production with no one watching the output in any systematic way.

This is not a compliance problem. It is an operational discipline problem. And the regulatory environment is catching up to it. Examiners are no longer satisfied with policy documents. They want to see evidence of ongoing monitoring, documented exception handling, and demonstrated accountability for model outputs. Organizations that cannot show that evidence are discovering that their governance theater is not providing the protection they thought it was.

There is also a technology layer to the financial services AI Hangover that other industries do not face at the same scale. AI in financial services is not always visible. Fraud detection models, credit scoring supplements, customer segmentation algorithms, and behavioral risk indicators are embedded in platforms that business teams use every day without understanding that AI is making decisions underneath. Shadow AI in financial services is not just employees using unauthorized tools. It is AI baked into approved vendor platforms that nobody has formally governed.

| FINANCIAL SERVICES REALITY CHECK: Regulatory examiners are asking three questions your AI governance program needs to answer. (1) Can you demonstrate that someone owns the ongoing monitoring of every AI model in production? (2) Can you produce documentation of a model output exception that was caught, escalated, and resolved in the last 90 days? (3) Can you show how your AI governance framework was updated in response to a regulatory guidance change in the past 12 months? |
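The second question, an exception that was caught, escalated, and resolved in the last 90 days, is easy to answer if exceptions are logged as structured records rather than buried in email threads. The sketch below shows one possible shape for that log. The `ModelException` record, its field names, and the `closed_loop_evidence` helper are hypothetical and do not come from any regulatory template.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

@dataclass
class ModelException:
    """One flagged model output and what happened to it (hypothetical schema)."""
    model: str
    caught_on: date
    escalated_to: Optional[str] = None   # named person or role, not a team inbox
    resolved_on: Optional[date] = None

    def is_closed_loop(self) -> bool:
        # Evidence requires all three steps: caught, escalated, resolved.
        return self.escalated_to is not None and self.resolved_on is not None

def closed_loop_evidence(log, as_of, days=90):
    """Exceptions caught in the lookback window that completed the full loop."""
    cutoff = as_of - timedelta(days=days)
    return [e for e in log
            if e.is_closed_loop() and cutoff <= e.caught_on <= as_of]
```

An empty result here does not necessarily mean the models are performing well. It can just as easily mean nobody is looking.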

The financial services organizations that are recovering from the AI Hangover fastest are the ones that stopped treating governance as a compliance function and started treating it as an operating discipline. The distinction matters. Compliance functions produce documents. Operating disciplines produce behavior. Governing AI requires behavior, not just documentation.

For more on the cybersecurity and strategic risk dimensions of AI governance in financial services, see our post on Cybersecurity as a Strategic Enabler.

Manufacturing: When the Line Stops, AI Gets the Blame

Manufacturing organizations have been adopting AI for predictive maintenance, quality control automation, supply chain optimization, and production scheduling. The efficiency gains are real. The governance gaps are equally real, and in manufacturing the consequences of those gaps have physical dimensions that other industries do not face.

When an AI-driven quality control system misses a defect, the defect ships. When a predictive maintenance model fails to flag equipment degradation, the equipment fails. When a production scheduling algorithm optimizes for throughput in a way that creates bottleneck risk downstream, the impact shows up on the floor before anyone in IT knows there is a problem.

What the AI Hangover Looks Like in Manufacturing

The manufacturing AI Hangover often starts with what I call the attribution gap. Something goes wrong in a production environment. Leadership wants to know why. The investigation reveals that an AI system was influencing the decision or the process where the failure occurred. But nobody can explain exactly how the AI made that decision, whether the AI was operating within its intended parameters, or whether the governance framework would have caught the problem if someone had been watching.

The attribution gap creates organizational friction that is expensive beyond the immediate incident. Once a production team loses confidence in an AI system, they stop using it. The investment goes dormant. The vendor gets blamed. And the organization cycles back to manual processes that are slower and more expensive than the AI was, while leadership tries to figure out why the AI project did not deliver.

The root cause is almost never the AI. It is the absence of the governance structure that would have kept the AI operating within safe parameters, flagged the drift before it became a problem, and given the production team the confidence that someone with authority was watching the system on their behalf.

There is also a workforce dimension to the manufacturing AI Hangover that deserves direct attention. AI-driven automation is changing roles faster than most organizations are managing the transition. Workers whose jobs have been modified or displaced by AI systems that were not properly introduced, explained, or governed are not a productivity problem. They are a governance problem. The human side of AI deployment is part of the operating model, and organizations that skipped it are paying for it now in retention, morale, and productivity.

| MANUFACTURING REALITY CHECK: Three questions that reveal your AI governance posture in manufacturing. (1) If an AI-assisted quality control decision led to a recall today, could you produce a complete audit trail of how the decision was made and who was responsible for monitoring it? (2) What is your process for revalidating an AI model after a significant change in raw materials, production volume, or equipment configuration? (3) Who in your organization has the authority to suspend an AI-assisted production process, and what triggers that decision? |
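The revalidation question can be turned into an explicit trigger: record the conditions a model was last validated under, compare them to current conditions, and flag any material change. The sketch below is illustrative only. The `OperatingConditions` fields and the 20 percent volume tolerance are assumptions that a real plant would replace with its own change-control criteria.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class OperatingConditions:
    """Conditions a model was validated under (hypothetical fields)."""
    raw_material_lot: str
    daily_volume: int
    equipment_config: str

def revalidation_reasons(validated, current, volume_tolerance=0.20):
    """Return the reasons, if any, that the model should be revalidated."""
    reasons = []
    if current.raw_material_lot != validated.raw_material_lot:
        reasons.append("raw materials changed")
    # Volume counts as changed only beyond the assumed tolerance band.
    drift = abs(current.daily_volume - validated.daily_volume)
    if drift > volume_tolerance * validated.daily_volume:
        reasons.append("production volume changed")
    if current.equipment_config != validated.equipment_config:
        reasons.append("equipment configuration changed")
    return reasons
```

A non-empty list is the documented trigger. Who receives it, and whether that person can suspend the process, is still an ownership decision that code cannot make.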

For manufacturing organizations wrestling with the legacy systems that AI is often layered on top of, our post on Beyond Legacy: Modernizing Your IT Backbone addresses the infrastructure dimension of AI readiness in operational environments.

The Root Cause Every Industry Shares

Three industries. Three sets of symptoms. One underlying condition.

In every case, the AI Hangover traces back to the same governance failure. Organizations deployed AI into operating environments without building the continuous governance structure that makes AI sustainable. They treated governance as a project deliverable rather than an operating discipline. They confused having a policy with having a program. And they discovered, at significant cost, that AI without governance is not a technology problem. It is a leadership problem.

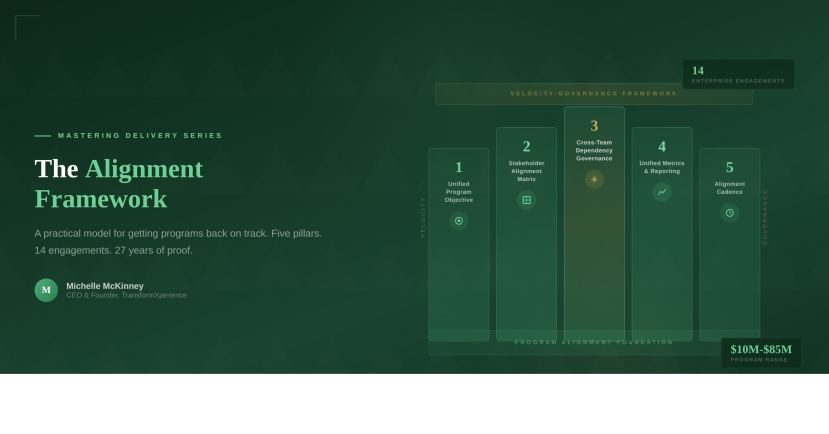

The AGE Framework, our proprietary Adaptive Governance Engine, was designed specifically to diagnose this condition and build the operating structures that prevent it. AGE measures governance maturity across three dimensions. Adaptive examines whether your AI strategy is aligned to defined business objectives across leadership, operations, and technology. Governance examines whether you have the operating structure to own AI decisions, validate outputs, and course-correct when systems fail. Execution examines whether your processes, data infrastructure, and change management capabilities are ready to support AI at production scale.

What AGE consistently reveals across healthcare, financial services, and manufacturing is the same pattern. Organizations score strongest on Execution because they have the tools and the people. They score weakest on Adaptive and Governance because they skipped the operating structure. That imbalance is the AI Hangover. And it is recoverable.

| THE RECOVERY PATH: Organizations that recover from the AI Hangover fastest share three characteristics. They stop treating governance as a one-time project and start treating it as a permanent operating discipline. They assign explicit ownership for AI governance at the operational level, not just the policy level. And they measure governance health continuously rather than relying on periodic audits to tell them what has already gone wrong. |

What Leaders Should Do This Week

Regardless of your industry, there are three actions that move the needle immediately.

First, complete an honest inventory of every AI system currently in production in your organization. Not the systems you approved. The systems that are actually running and making decisions. Shadow AI, vendor-embedded AI, and department-level AI tool adoption are all part of this inventory. You cannot govern what you have not mapped.

Second, identify who owns each system operationally. Not who signed the contract. Who is responsible for monitoring the output, validating the results, and escalating when something goes wrong. If that owner does not exist for a given system, that system is ungoverned. Document the gap.

Third, assess your governance readiness using a structured framework. Our AI Readiness Self-Assessment is available on the Insights page at transformxperience.com and covers the foundational dimensions that determine whether your organization is positioned to govern AI as a permanent operating discipline.
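The first two actions, mapping every system and naming its operational owner, fit in a very small data structure. The sketch below is one possible shape for that inventory. The `AISystem` record, its field names, and the source categories are hypothetical. The point it illustrates is that the contract signer and the operational owner are separate fields, and that only the second one determines whether a system is governed.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class AISystem:
    """One AI system actually running in production (hypothetical schema)."""
    name: str
    source: str                              # e.g. "approved", "vendor-embedded", "shadow"
    contract_signer: Optional[str] = None    # who signed; not the same as ownership
    operational_owner: Optional[str] = None  # who monitors, validates, and escalates

def ungoverned(inventory):
    """Names of systems with no operational owner: the gaps to document."""
    return [s.name for s in inventory if not s.operational_owner]

# Illustrative entries only; none of these are real systems.
inventory = [
    AISystem("risk-scoring", "approved", "CIO", "clinical informatics lead"),
    AISystem("vendor-chat-assistant", "vendor-embedded", "procurement"),
    AISystem("team-summarizer", "shadow"),
]
gaps = ungoverned(inventory)
```

In this example, `gaps` lists the two systems that have a signer or a user but nobody responsible for watching the output.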

The AI Hangover is real in every industry. The organizations that come out of it stronger are the ones that use the recovery as an opportunity to build the governance infrastructure they skipped the first time. That is not a technology investment. It is a leadership decision.

About the Author

Michelle McKinney, MBA, CSM

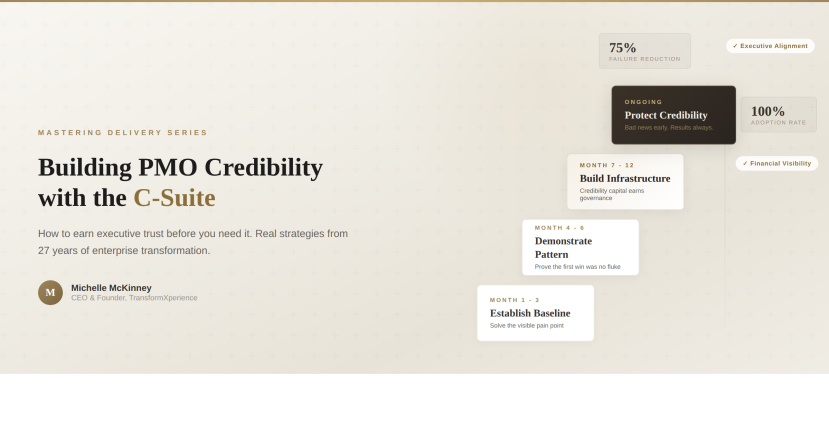

Michelle McKinney is the CEO and Founder of TransformXperience LLC, an AI-powered IT consulting firm based in Atlanta, Georgia. With 44 years of technology leadership experience spanning Fortune 500 enterprises, Big 4 consulting, and government sectors, Michelle built TransformXperience on a single principle: organizations should own their transformation, not rent it. She is the creator of the AGE Framework (Adaptive Governance Engine), the Velocity-Governance Framework, and the TransformXperience Delivery Method. TransformXperience serves healthcare, financial services, and manufacturing organizations navigating AI readiness, PMO modernization, and SOP transformation.