There is a moment in every AI implementation where the organization exhales. The model is deployed. The dashboards are live. The executive sponsor sends a congratulatory email. The project team moves on to the next initiative.

That exhale is the most dangerous moment in the entire AI lifecycle.

Because AI does not stop working when your project closes. It keeps processing. It keeps making decisions, or informing the decisions your people make. But the world it was trained on keeps changing, and without continuous governance, its performance keeps drifting.

Model drift. Data drift. Concept drift. These are not theoretical risks. They are operational realities that affect every AI system in production. A model that was 94% accurate at deployment can quietly degrade to 72% accuracy over six months as the underlying data distribution shifts. And here is the part that should concern every executive reading this: the model will not tell you it is wrong. It will continue delivering answers with the same confidence it had on day one.

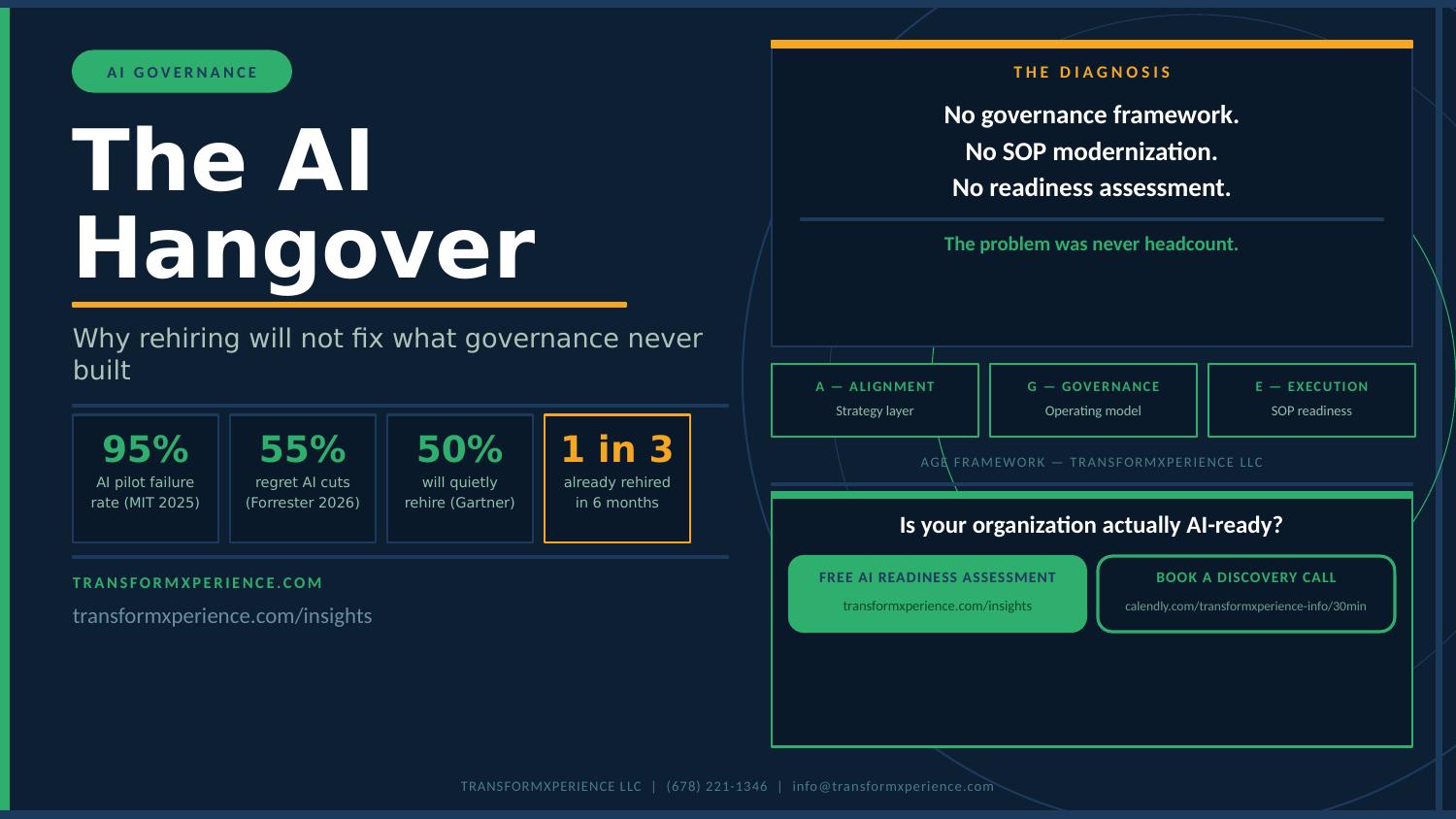

Too few leaders are talking about this. The industry conversation is focused almost entirely on getting AI implemented. Getting the pilot to production. Getting the business case approved. Getting the data cleaned up. All of that matters. But it only matters if you also prepare for what happens after.

The Continuous Improvement Imperative

Once you implement AI, four things must become continuous disciplines, not one-time deliverables and not annual check-ins:

1. Standards Must Evolve

The standards you set at deployment are based on what you knew at deployment. Six months later, you know more. The model has revealed edge cases nobody anticipated. Regulatory requirements have shifted. Industry benchmarks have been updated. Your AI governance standards must evolve with them.

This means your PMO needs a mechanism for reviewing and updating AI governance standards on a defined cadence. Not when someone remembers. Not when something breaks. On a schedule, with accountability, with documented outcomes.

2. Learnings Must Feed Back Into the System

Every AI model in production generates learnings. Performance data. Error patterns. User feedback. Edge cases. Business context changes. These learnings are gold, but only if they feed back into the system.

Most organizations treat model deployment like a handoff. The project team builds it, the operations team runs it. But the learnings that come from running it need to inform the next intake decision, the next data quality standard, the next model design. The PMO is the governance layer that ensures this feedback loop exists and operates.

3. Processes Must Adapt

The processes you designed at launch were designed for a specific set of conditions. As AI models evolve, as data sources change, as business requirements shift, those processes need to adapt. Escalation paths that made sense at deployment may not account for new failure modes. Approval workflows may need additional checkpoints as model complexity increases.

This is not about throwing out your processes every quarter. It is about building adaptability into the process design itself. Review triggers. Threshold-based escalations. Automated monitoring that flags when processes are no longer aligned with model behavior.

4. Data Governance Becomes Permanent

An AI-ready PMO builds data governance into the project lifecycle from intake through deployment and into ongoing operations. That means master data management standards are part of the project charter. Data quality checkpoints are built into every stage gate. Data lineage and provenance are tracked as deliverables, not afterthoughts. And model performance monitoring is written into the operational handoff, so it continues after the project team moves on.

The PMO does not need to do the technical data work. But it needs to ensure the governance framework exists and is followed. Without that, you are building AI on an unreliable foundation, and you will not know it until the model gives you wrong answers with confidence.

What This Looks Like in Practice

Here is what continuous AI governance looks like when a PMO gets it right:

Monthly model performance reviews are scheduled and tracked as governance deliverables. Accuracy, precision, recall, and business impact metrics are reviewed against baseline thresholds. Deviations trigger defined response protocols.
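To make "reviewed against baseline thresholds" concrete, here is a minimal Python sketch of the check a monthly review might run for a binary classifier. Everything in it is a hypothetical illustration rather than a prescribed tool: the `Threshold` type, the `binary_metrics` and `review` helpers, the baseline values, and the assumption that each metric gets a tolerated absolute drop. A real program would pull labeled production samples from its own monitoring stack.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Hypothetical per-metric baseline recorded at deployment."""
    baseline: float  # value measured when the model went live
    max_drop: float  # tolerated absolute drop before a deviation is flagged


def binary_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Accuracy, precision and recall for 0/1 labels on a labeled production sample."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("need two non-empty label lists of equal length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    correct = sum(1 for t, p in pairs if t == p)
    return {
        "accuracy": correct / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


def review(current: dict[str, float], thresholds: dict[str, Threshold]) -> list[str]:
    """Names of metrics whose drop from baseline exceeds the tolerated amount."""
    return sorted(
        name
        for name, t in thresholds.items()
        if t.baseline - current[name] > t.max_drop
    )


# Illustrative baselines only; each organization sets its own at deployment.
THRESHOLDS = {
    "accuracy": Threshold(baseline=0.94, max_drop=0.05),
    "precision": Threshold(baseline=0.78, max_drop=0.05),
    "recall": Threshold(baseline=0.80, max_drop=0.05),
}

if __name__ == "__main__":
    y_true = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
    y_pred = [1, 0, 0, 1, 0, 1, 0, 0, 1, 0]
    current = binary_metrics(y_true, y_pred)
    print(current)                      # accuracy 0.7, precision 0.75, recall 0.6
    print(review(current, THRESHOLDS))  # ['accuracy', 'recall']
```

The sketch only detects the deviation. What happens next belongs to the defined response protocol, which is deliberately kept outside the code so that governance, not a script, owns the decision.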

Quarterly data governance audits validate that training data sources remain reliable, that data lineage documentation is current, and that new data sources are evaluated against quality standards before being incorporated into model retraining.

Semi-annual SOP reviews ensure that standard operating procedures reflect the current state of AI operations. Intake criteria, escalation paths, approval workflows, and handoff procedures are updated based on accumulated learnings.

Continuous feedback loops between model operators, business users, and PMO governance ensure that insights from production operations inform future AI initiatives. The PMO tracks these learnings as organizational assets, not project artifacts.

Retraining triggers and decommissioning protocols are defined before deployment. When a model falls below performance thresholds, the response is predetermined, not reactive. When a model is no longer viable, there is a documented process for retiring it safely.
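A predetermined response can be written down as an explicit policy before go-live. The Python sketch below is one hypothetical way to encode it: the two performance floors, the annual retraining budget, and the `Action` names are illustrative assumptions, not recommended values. The point is that the decision becomes a lookup against agreed rules rather than a judgment made under pressure after the model has already slipped.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue monitoring"
    RETRAIN = "initiate retraining cycle"
    DECOMMISSION = "start decommissioning protocol"


# Hypothetical policy values agreed and documented before deployment.
RETRAIN_BELOW = 0.88        # primary-metric floor that triggers retraining
DECOMMISSION_BELOW = 0.75   # floor below which the model is retired outright
MAX_RETRAINS_PER_YEAR = 3   # assumed budget; exhausting it counts as "no longer viable"


def decide(metric: float, retrains_this_year: int) -> Action:
    """Map the latest monitored metric to the response defined before deployment."""
    if metric < DECOMMISSION_BELOW:
        return Action.DECOMMISSION
    if metric < RETRAIN_BELOW:
        if retrains_this_year >= MAX_RETRAINS_PER_YEAR:
            # Retraining budget is spent and performance is still below the floor.
            return Action.DECOMMISSION
        return Action.RETRAIN
    return Action.CONTINUE


if __name__ == "__main__":
    for metric, retrains in [(0.93, 0), (0.85, 1), (0.85, 3), (0.72, 0)]:
        print(metric, retrains, decide(metric, retrains).value)
```

Keeping the thresholds as named constants means the policy document and the monitoring job can cite the same numbers, and changing them becomes a governed, reviewable change.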

None of this is technically complex. It is operationally disciplined. And that is exactly what a PMO is built to provide.

The PMO’s Evolving Role

Traditional PMOs think in terms of projects with a beginning, middle, and end. Initiation. Planning. Execution. Closure. The project is delivered. The team is reassigned. The PMO moves on.

AI does not work that way. There is no closure phase. There is deployment, and then there is continuous operation, continuous monitoring, continuous improvement. The PMO that governs AI initiatives must evolve from a delivery organization to a continuous governance organization.

This does not mean the PMO becomes an AI operations team. It means the PMO ensures that AI operations are governed with the same discipline it applies to project delivery. Stage gates do not end at deployment. They extend into production. Governance checkpoints are not just for project milestones. They are for operational milestones. Risk management is not just about delivery risk. It is about operational risk, model risk, data risk, and adoption risk.

This is a fundamental shift in how PMOs operate. And the organizations that make this shift will be the ones that actually realize the ROI their AI business cases promised.

The Cost of Getting This Wrong

When organizations deploy AI without continuous governance, the consequences are predictable:

- Models degrade silently, producing increasingly inaccurate outputs that inform business decisions for months before anyone notices

- Data quality issues compound over time, with each retraining cycle potentially introducing new biases or errors that were not present at launch

- Regulatory compliance gaps emerge as requirements evolve and governance frameworks remain static

- Organizational trust in AI erodes when models produce unexpected results, making future AI adoption harder even when the technology is sound

- ROI projections fail to materialize because the operational discipline needed to sustain model performance was never established

The irony is that organizations invest millions in getting AI to production and then let it degrade because they did not invest in the governance required to sustain it. The implementation was the easy part. The hard part is what comes after.

Preparing Your PMO for Continuous AI Governance

If your organization is deploying AI or planning to, here are five questions to ask right now:

- Who owns model performance after deployment, and how is that performance tracked and reported?

- What triggers a model retraining cycle, and who has the authority to initiate one?

- Are your SOPs updated to reflect AI-specific governance requirements, or are you still operating on pre-AI procedures?

- Does your PMO governance framework extend beyond project delivery into ongoing AI operations?

- How are learnings from AI operations feeding back into your intake process, your data governance standards, and your capability development?

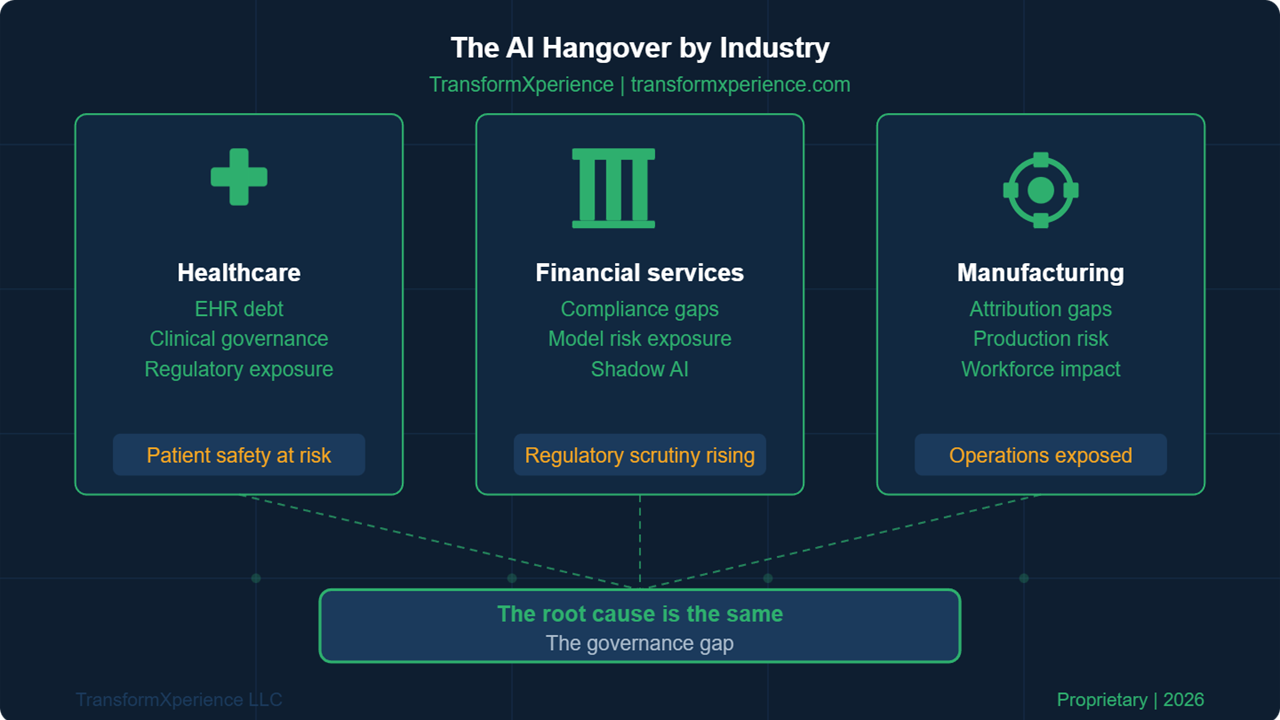

If you cannot answer these questions clearly, you have a governance gap that will undermine your AI investment over time.

AI implementation is not a project with an end date. It is a permanent operational commitment that requires continuous improvement in your standards, your learnings, your processes, and your data. The PMO is the organizational mechanism built to provide that discipline. But only if it evolves to meet the challenge.

Take the Next Step

TransformXperience helps mid-market organizations build AI-ready PMOs that govern, measure, and continuously improve AI operations. Our SOP Modernization offering ensures your procedures keep pace with your AI ambitions.

Service Offering Expansion: Continuous AI Governance

The following outlines how the continuous AI governance concept can be developed into a service offering for TransformXperience. This builds on the SOP Modernization offering and extends it into post-deployment governance.

Service Concept

Continuous AI Governance Program

A structured, ongoing engagement that provides organizations with the governance discipline required to sustain AI performance after deployment. This is not consulting. This is operational governance infrastructure that your team owns and operates, with TransformXperience providing the framework, training, and periodic review.

How It Connects to Existing Offerings

| Existing Offering | Connection Point | Expansion |
|---|---|---|
| SOP Modernization | SOPs require continuous updates as AI operations evolve | Semi-annual SOP review cycles become part of Continuous AI Governance |
| PMO Modernization | PMOs must extend governance beyond project delivery | Post-deployment governance framework embedded in PMO operating model |
| Data Analytics & BI | Model performance monitoring requires analytics infrastructure | Governance dashboards and automated threshold alerts |
| Process Optimization | AI processes must adapt as models and data change | Continuous process adaptation cycles tied to model performance data |

Engagement Structure

| Component | Details |
|---|---|
| Initial Setup | Establish governance framework, define performance thresholds, create monitoring protocols, update SOPs, and train teams. 4–6 weeks. |
| Monthly Model Reviews | Structured review of model performance metrics against baselines. Deviation analysis and response recommendations. |
| Quarterly Data Audits | Data source validation, lineage review, quality assessment, and bias detection across all AI systems in production. |
| Semi-Annual SOP Updates | Review and update all AI-related SOPs based on accumulated learnings, regulatory changes, and operational feedback. |
| Annual Governance Assessment | Comprehensive assessment of AI governance maturity with updated roadmap and capability recommendations. |
| Continuous Feedback Integration | Ongoing mechanism for capturing and routing operational learnings back into intake, design, and governance standards. |

Revenue Model

| Engagement Tier | Scope | Investment Range |
|---|---|---|
| Setup Only | Framework + training, client self-manages | $25,000 – $40,000 |
| Setup + Quarterly Reviews | Framework + quarterly governance reviews | $40,000 setup + $10,000/quarter |
| Full Continuous Governance | All components, ongoing partnership | $40,000 setup + $5,000 – $8,000/month |
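The tiers translate into first-year and ongoing contract values with simple arithmetic. The Python sketch below uses the low and high ends of each range from the Revenue Model table and assumes, for illustration, that recurring fees run for a full twelve months in year one (in practice they may start only after the 4–6 week setup). The `TIERS` structure and helper names are hypothetical.

```python
# Pricing from the Revenue Model table (USD).
# Assumption: recurring fees run for all 12 months of year one.
TIERS = {
    "Setup Only": {
        "setup": (25_000, 40_000), "fee": (0, 0), "periods_per_year": 0,
    },
    "Setup + Quarterly Reviews": {
        "setup": (40_000, 40_000), "fee": (10_000, 10_000), "periods_per_year": 4,
    },
    "Full Continuous Governance": {
        "setup": (40_000, 40_000), "fee": (5_000, 8_000), "periods_per_year": 12,
    },
}


def first_year_range(tier: dict) -> tuple[int, int]:
    """(low, high) first-year value: one-time setup plus a full year of recurring fees."""
    n = tier["periods_per_year"]
    low = tier["setup"][0] + tier["fee"][0] * n
    high = tier["setup"][1] + tier["fee"][1] * n
    return low, high


def recurring_year_range(tier: dict) -> tuple[int, int]:
    """(low, high) value of each later year, when only recurring fees apply."""
    n = tier["periods_per_year"]
    return tier["fee"][0] * n, tier["fee"][1] * n


if __name__ == "__main__":
    for name, tier in TIERS.items():
        lo1, hi1 = first_year_range(tier)
        lo2, hi2 = recurring_year_range(tier)
        print(f"{name}: year one ${lo1:,}-${hi1:,}; later years ${lo2:,}-${hi2:,}")
```

On these assumptions, a Full Continuous Governance client is worth $100,000–$136,000 in year one and $60,000–$96,000 in each following year, and Setup + Quarterly Reviews is worth $80,000 in year one and $40,000 per year after that, compared with a one-time $25,000–$40,000 for Setup Only.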

Strategic Value

This offering creates recurring revenue that accumulates with each client added. Every SOP Modernization engagement becomes a natural lead into Continuous AI Governance. Every PMO Modernization client needs post-deployment governance. The content-to-pipeline flow is:

- Daily Byte episodes on AI governance and continuous improvement seed the concept (awareness)

- This blog post deepens the message and introduces the governance gap (education)

- The AI Readiness Assessment reveals the gap in the prospect’s organization (qualification)

- SOP Modernization closes the immediate procedural gaps (initial engagement)

- Continuous AI Governance provides the ongoing discipline to sustain results (recurring revenue)

This is the full lifecycle play. You are not just selling a project. You are building a long-term governance partnership that grows as the client’s AI maturity grows.